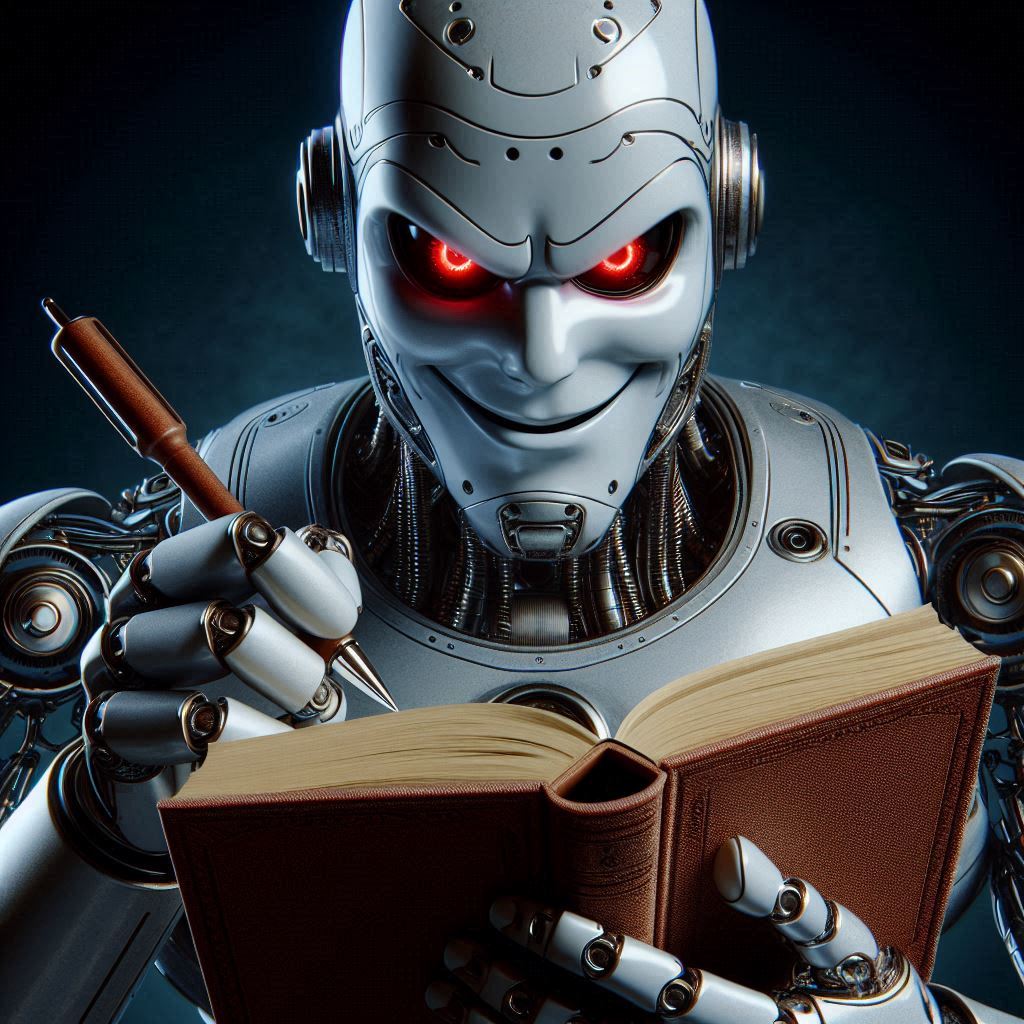

Ladies and gentlemen, gather ’round, for the digital age has bestowed upon us a modern-day tragedy: Wikipedia, our beloved free encyclopedia, is under siege, not by hackers, not by rogue editors, but by an army of insatiable AI scrapers.

Yes, you heard that right. The same Wikipedia that has bailed you out in last-minute research projects, helped you win pub trivia, and let you fall down an endless rabbit hole from “Roman aqueducts” to “the history of cat memes”—that Wikipedia—is struggling under the weight of AI bots vacuuming up its data like a Dyson on steroids.

The AI Boom… and Wikipedia’s Data Bill Doom

With artificial intelligence growing faster than your unread emails, companies are scrambling to train their models on the vast ocean of human knowledge. And what better place to feast than Wikipedia? It’s free, it’s comprehensive, and it doesn’t come with a paywall tantrum. Unfortunately, this massive, automated information buffet is causing Wikipedia’s servers to groan under the pressure, forcing the nonprofit to shell out for more infrastructure just to keep the lights on.

The Wikimedia Foundation, the force behind Wikipedia, recently raised the alarm, saying that automated requests for content “have grown exponentially.” Translation: AI scrapers are hitting Wikipedia so hard that it’s becoming a costly nuisance.

To put it bluntly, Wikipedia is built to handle viral celebrity scandals, global elections, and sudden bursts of nerds frantically updating the page on black holes every time a new space discovery drops. But AI bots? These relentless knowledge vacuums are a different beast entirely, generating unprecedented levels of traffic that are stretching resources thin.

AI Scrapers: “We’ll Take All of It, Please.”

Here’s the kicker: these bots aren’t just nibbling on a few articles for a snack; they’re scraping Wikipedia dry like a swarm of digital locusts. The worst part? They don’t even say thank you. The result: increased bandwidth costs, more server strain, and a growing headache for the Wikipedia team.

So, what’s the response? Wikipedia’s managers are slamming the brakes on rogue AI crawlers by implementing “case-by-case” rate limiting. Essentially, they’re putting these bots on a strict data diet. Some have even been banned outright. But this game of whack-a-bot is far from over, and the Wikimedia Foundation is now looking to its community for ideas on how to better identify and filter out traffic from AI crawlers.
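For the curious, here is roughly what a per-client data diet can look like. This is a minimal token-bucket sketch in Python, offered purely as an illustration of rate limiting in general. It is not Wikimedia's actual setup, which isn't described here, and the names `TokenBucket` and `should_serve`, along with the `rate` and `capacity` numbers, are made up for the example.

```python
import time


class TokenBucket:
    """Hypothetical per-client limiter; not Wikimedia's real implementation."""

    def __init__(self, rate, capacity, now=None):
        self.rate = rate          # tokens refilled per second (assumed value)
        self.capacity = capacity  # largest burst a client may make
        self.tokens = capacity    # every client starts with a full bucket
        self.updated = time.monotonic() if now is None else now

    def allow(self, now=None):
        """Return True if one request may proceed, False if it is throttled."""
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.updated)
        # Refill for the time that has passed, but never above capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# One bucket per client, so a greedy bot only throttles itself.
buckets = {}


def should_serve(client_id, now=None, rate=1.0, capacity=5):
    """Look up (or create) the client's bucket and spend one token if possible."""
    bucket = buckets.get(client_id)
    if bucket is None:
        bucket = buckets[client_id] = TokenBucket(rate, capacity, now)
    return bucket.allow(now)
```

With these made-up numbers, a client can burst five requests and then gets roughly one per second. A "case-by-case" policy would amount to picking a different `rate` and `capacity` per client, or refusing a client outright.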

The Ironic Twist

The whole situation is drenched in irony. Wikipedia, which has been freely offering knowledge to the world for decades, is now being milked dry by companies trying to create AI that will, in turn, summarize and regurgitate Wikipedia’s own knowledge. It’s the ouroboros of the information age—AI eating its own tail, except the tail is Wikipedia’s bandwidth bill.

So, what can we do? Well, for starters, you could donate a few bucks to Wikipedia the next time that friendly little banner pops up (instead of closing it for the tenth time). Or, if you happen to be in charge of an AI company’s scraping division—maybe, just maybe, dial it down a notch before Wikipedia goes full paywall on us.

Because let’s be real—if Wikipedia ever goes down, how else are we supposed to settle heated debates about the height of the Eiffel Tower at 3 AM?

TL;DR: AI bots are overloading Wikipedia with automated data requests, making it costly to maintain. Wikipedia is now cracking down on AI scrapers, but the fight for bandwidth is just beginning. The moral? Maybe AI should learn some digital manners before scraping the knowledge economy dry.